
Google TurboQuant: The Breakthrough That Could Make AI 10x Cheaper & Faster

Every so often, a research paper drops that doesn't make headlines the way a flashy product launch does — but quietly rewrites the rules of an entire industry. Today, Google Research published one of those papers. It's called TurboQuant, and if the benchmarks hold up in the real world, it could be one of the most consequential AI efficiency breakthroughs in years.

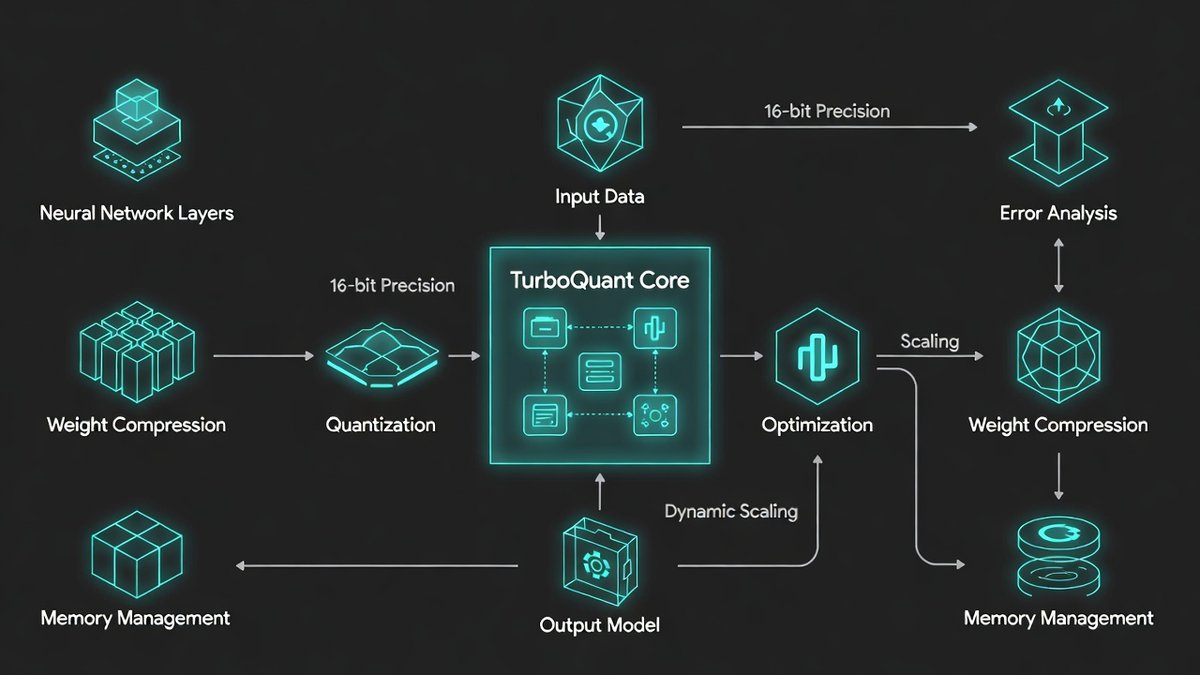

Google Research described the technology as a novel way to shrink AI's working memory without impacting performance. The compression method uses a form of vector quantization to clear cache bottlenecks in AI processing and, according to the researchers, would essentially allow AI to remember more information while taking up less space and maintaining accuracy.

At digital8hub.com, we cover AI not just when it's exciting — but when it's important. TurboQuant is both.

The Problem TurboQuant Solves

To understand why TurboQuant matters, you need to understand one of the most persistent and expensive problems in modern AI: the KV cache bottleneck.

When you chat with an LLM, the model doesn't just process your latest message. It keeps a running record of the entire conversation in the key-value (KV) cache: the model's short-term memory for your session, a sort of journal of everything that matters in the conversation so far.

The problem? As context windows grow, the memory required to store the KV cache grows in proportion, consuming GPU memory and slowing inference. Vector quantization has long been used to compress these high-dimensional numerical representations, but conventional approaches have a persistent limitation: they must store quantization constants in high precision for every small block of data. That adds between one and two extra bits per number, partially defeating the purpose of compression.
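To see where "one to two extra bits per number" can come from, here is a small worked calculation. It is only a sketch under assumed settings that the article does not specify: each block stores a 16-bit scale and a 16-bit zero point, and blocks hold 16 or 32 values. The function names are hypothetical.

```python
def overhead_bits_per_value(block_size, constants_per_block=2, constant_bits=16):
    """Extra bits each value pays for its block's stored constants.

    Assumed layout (illustrative only): every block keeps
    `constants_per_block` constants of `constant_bits` bits each.
    """
    return constants_per_block * constant_bits / block_size


def effective_bits_per_value(payload_bits, block_size, **kwargs):
    """Payload bits plus the amortised cost of the per-block constants."""
    return payload_bits + overhead_bits_per_value(block_size, **kwargs)


for block_size in (32, 16):
    extra = overhead_bits_per_value(block_size)
    total = effective_bits_per_value(3, block_size)
    print(f"block of {block_size}: +{extra:g} bits/value, {total:g} bits in total")
```

Under these assumptions, a nominal 3-bit quantiser really costs 4 to 5 bits per value once the constants are counted, which is the overhead the paragraph above describes.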

In simple terms: AI has been spending enormous amounts of expensive memory just to remember what it's talking about. And the bigger the conversation, the more memory it burns.
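A back-of-the-envelope calculation makes the growth concrete. The model shape below (32 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical configuration chosen for illustration, not one taken from the paper, and the figures are for a single conversation.

```python
def kv_cache_bytes(seq_len, n_layers=32, n_kv_heads=8, head_dim=128, bits_per_value=16):
    """Approximate KV cache size in bytes for one sequence.

    All default shape parameters are assumptions for illustration.
    """
    # Two tensors (keys and values) per layer, one vector per KV head per token.
    values = 2 * n_layers * n_kv_heads * head_dim * seq_len
    return values * bits_per_value // 8


GIB = 1024 ** 3
for tokens in (8_192, 32_768, 131_072):
    print(f"{tokens:>7} tokens: {kv_cache_bytes(tokens) / GIB:.1f} GiB")
```

The size is linear in the number of tokens, so a context sixteen times longer needs a cache sixteen times larger. It is also linear in `bits_per_value`, which is the quantity a KV cache quantiser reduces.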

What TurboQuant Does Differently

Google says TurboQuant cuts KV cache memory use by 6x and delivers a reported 8x speedup in attention computation on H100 GPUs, without any loss in accuracy. It compressed KV caches to 3 bits per value without requiring model retraining or fine-tuning, and without measurable accuracy loss across question answering, code generation, and summarisation tasks.

That last point deserves emphasis. No retraining. No accuracy loss. TurboQuant can be applied as a post-processing step to existing models such as Gemma, Mistral, and other open-source LLMs, with no training or fine-tuning required.

The magic happens through two companion algorithms working in tandem:

PolarQuant converts data vectors from standard Cartesian coordinates into polar coordinates, separating each vector into a radius representing magnitude and a set of angles representing direction. Because the angular distributions are predictable and concentrated, PolarQuant skips the expensive per-block normalisation step that conventional quantisers require, giving high-quality compression with zero overhead from stored quantisation constants.
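The polar idea can be illustrated with a toy sketch. This is not Google's implementation. The recursive pairing scheme, the uniform angle grid, and all function names are assumptions made for illustration, and the sketch does nothing to exploit the concentrated angle distributions mentioned above.

```python
import math


def to_polar(vec):
    """Turn a vector whose length is a power of two into one radius plus angles.

    Coordinates are paired into (radius, angle); the radii are paired again,
    and so on until a single radius (the vector's norm) remains.
    """
    n = len(vec)
    if n < 1 or n & (n - 1):
        raise ValueError("length must be a power of two")
    radii, levels = list(vec), []
    while len(radii) > 1:
        pairs = list(zip(radii[0::2], radii[1::2]))
        levels.append([math.atan2(y, x) for x, y in pairs])
        radii = [math.hypot(x, y) for x, y in pairs]
    return radii[0], levels


def from_polar(radius, levels):
    """Invert to_polar by expanding each radius back into a coordinate pair."""
    radii = [radius]
    for angles in reversed(levels):
        radii = [c for r, t in zip(radii, angles)
                 for c in (r * math.cos(t), r * math.sin(t))]
    return radii


def quantise_angles(levels, bits):
    """Snap each angle to the centre of one of 2**bits fixed, equal-width bins."""
    n_bins = 2 ** bits
    out = []
    for depth, angles in enumerate(levels):
        # First level pairs signed coordinates; later levels pair non-negative radii.
        lo, hi = (-math.pi, math.pi) if depth == 0 else (0.0, math.pi / 2)
        width = (hi - lo) / n_bins
        out.append([lo + (min(int((t - lo) / width), n_bins - 1) + 0.5) * width
                    for t in angles])
    return out


vec = [0.5, -1.2, 0.3, 2.0, -0.7, 0.1, 1.5, -0.4]
radius, levels = to_polar(vec)
approx = from_polar(radius, quantise_angles(levels, bits=4))
print(f"norm {radius:.3f}, error at 4 bits per angle: {math.dist(vec, approx):.3f}")
```

The angle bins are fixed in advance and identical for every vector, so the only full-precision number kept per vector is its radius. There is no per-block scale or zero point to store.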

QJL (Quantized Johnson-Lindenstrauss) uses a mathematical technique called the Johnson-Lindenstrauss Transform to shrink complex, high-dimensional data while preserving the essential distances and relationships between data points. It then reduces each coordinate of the resulting vector to a single sign bit (+1 or -1), creating a high-speed shorthand that requires zero memory overhead.
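A minimal sketch of the sign-bit idea follows, assuming a plain Gaussian random projection and an estimator that combines the key's sign bits with a full-precision query. The function names are hypothetical, and keeping the key's norm as one extra float is an assumption of this illustration. It is not the paper's code. The row count in the demo is deliberately large so the estimate is tight; it is not a realistic bit budget.

```python
import math
import random


def make_projection(n_rows, dim, seed=0):
    """Random Gaussian matrix shared by every key and query."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_rows)]


def project(matrix, vec):
    """Multiply the projection matrix by a vector."""
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


def encode_key(matrix, key):
    """Keep one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if p >= 0 else -1 for p in project(matrix, key)]
    return signs, math.sqrt(sum(k * k for k in key))


def estimate_dot(matrix, query, encoded_key):
    """Estimate <query, key> from the key's sign bits and the unquantised query.

    For a Gaussian row g, E[sign(g.k) * (g.q)] = sqrt(2/pi) * <q, k> / |k|,
    so averaging over rows and rescaling gives an unbiased estimate.
    """
    signs, key_norm = encoded_key
    total = sum(s * p for s, p in zip(signs, project(matrix, query)))
    return math.sqrt(math.pi / 2) * key_norm * total / len(signs)


if __name__ == "__main__":
    rng = random.Random(1)
    dim = 16
    key = [rng.uniform(-1, 1) for _ in range(dim)]
    query = [rng.uniform(-1, 1) for _ in range(dim)]
    matrix = make_projection(n_rows=4000, dim=dim)
    exact = sum(q * k for q, k in zip(query, key))
    approx = estimate_dot(matrix, query, encode_key(matrix, key))
    print(f"exact {exact:.3f}, estimated from sign bits {approx:.3f}")
```

The point of the sketch is that attention scores are inner products, and an inner product can be estimated without bias even when one side has been reduced to signs.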

Together, the two methods eliminate the "memory tax" that has been silently draining AI infrastructure budgets for years.

The Numbers Are Staggering

The headline figures: at least 6x memory compression, an 8x attention speedup on H100 GPUs, and 3-bit compression with no measurable accuracy loss.

In nearest neighbour search tasks, TurboQuant outperformed standard Product Quantisation and RaBitQ in recall while reducing indexing time to virtually zero: just 0.0013 seconds for 1536-dimensional vectors. Because TurboQuant is data-oblivious, it eliminates the need for the time-consuming k-means training phase required by conventional methods, which can take hundreds of seconds for large datasets.

For the developers and infrastructure teams running AI at scale, these numbers represent a direct and dramatic reduction in operating costs. Fewer GPUs needed. Smaller memory footprints. Faster responses. Lower bills.

Google's DeepSeek Moment?

The reaction from the tech community has been immediate and electric. The original announcement from Google Research generated over 7.7 million views on X within 24 hours, signalling that the industry was hungry for a solution to the memory crisis. Some, like Cloudflare CEO Matthew Prince, are calling this Google's DeepSeek moment, a reference to the efficiency gains achieved by the Chinese AI model, which was trained at a fraction of the cost of its rivals while remaining competitive on results.

Another comparison also went viral. Fans of the HBO series Silicon Valley were quick to draw parallels with Pied Piper, the show's fictional compression startup, and the jokes flooded social media within hours of the paper dropping.

Within 24 hours of the release, community members began porting the algorithm to popular local AI libraries like MLX for Apple Silicon and llama.cpp. Technical analyst Prince Canuma shared one of the most compelling early benchmarks, implementing TurboQuant in MLX to test a large open-source model.

Wall Street Is Already Reacting

The financial implications of TurboQuant extend beyond the AI lab to the stock market. Following the announcement, analysts observed a downward trend in the stock prices of major memory suppliers. The market's reaction reflects a realisation that if AI giants can compress their memory requirements by a factor of six through software alone, the insatiable demand for High Bandwidth Memory may be tempered by algorithmic efficiency.

The effect has reached the wider memory and storage sector, with notable stock declines reported across suppliers including Micron, Seagate, and SanDisk.

This is the classic dynamic of software efficiency eating hardware demand — the same phenomenon that saw cloud computing reduce the need for on-premise servers. If TurboQuant scales as promised, the companies betting on explosive AI memory demand may need to revise their projections significantly.

What Comes Next?

It's worth noting that TurboQuant hasn't yet been deployed broadly; for now it remains a lab breakthrough. TurboQuant could lead to efficiency gains and systems that require less memory during inference, but it wouldn't necessarily solve the wider RAM shortages driven by AI. It targets only inference memory, not training, which continues to require massive amounts of RAM.

The thing to watch is whether any of the major open-source serving frameworks merge this. The techniques are described as "exceptionally efficient to implement" with "negligible runtime overhead," and the early independent implementations suggest that's true. Whether it becomes a checkbox in Ollama or a flag in llama.cpp is the real question.

Google Research plans to present its findings at the ICLR 2026 conference next month in Rio de Janeiro, and the companion PolarQuant paper at AISTATS 2026 in Tangier, Morocco.

Open-source code is widely expected around Q2 2026 — at which point TurboQuant could rapidly become a standard component of AI inference pipelines worldwide.

The AI race has always been about who has the biggest models and the most compute. TurboQuant suggests the next frontier is smarter, leaner, more efficient systems — and Google just fired the starting gun.

Stay across every breakthrough in AI and technology at digital8hub.com — your trusted source for the innovations reshaping our digital world.
